Recently we were brought in as part of the search team for DukeMedicine’s new portal to resolve crucial search quality issues. As beautiful and functional as the site is, it wasn’t until late in the project that the team realized that search had a serious problem: results were not at all relevant to what Duke’s patients were searching for. Duke’s patients use a single search box to find all kinds of content. They might search for “dutch doctor” to find a Dutch-speaking doctor, or look for a liver specialist near them by typing in “liver doctor Durham”. Search results were not living up to anyone’s expectations.

Even though everything was in the Solr index, users of the website were having a really difficult time finding what they were looking for. You can’t expect the average end user to craft complex queries, manually specifying boosts for fields they have never heard of. Users expect smart, Google-like search that provides carefully tuned results by automatically searching across multiple fields.
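To make "automatically searching across multiple fields" concrete, here is a minimal sketch of how a plain search-box query might be turned into request parameters for Solr's eDisMax query parser. The `defType` and `qf` parameters are standard Solr, but the field names and boost weights are hypothetical and are not the ones used on this project.

```python
from urllib.parse import urlencode

# Hypothetical field names and boost weights; a real schema will differ.
FIELD_BOOSTS = {"title": 10, "specialties": 5, "languages": 3, "body": 1}


def build_search_params(user_query, field_boosts=FIELD_BOOSTS, rows=10):
    """Turn a plain search-box query into eDisMax request parameters."""
    # qf lists every field to search, each with its own boost (field^weight).
    qf = " ".join(f"{field}^{boost}" for field, boost in field_boosts.items())
    return {
        "q": user_query,       # exactly what the user typed
        "defType": "edismax",  # let Solr spread the query across fields
        "qf": qf,
        "rows": rows,
    }


params = build_search_params("liver doctor Durham")
query_string = urlencode(params)  # ready to append to a /select URL
```

The user types three words and the boosts live in configuration, which is where the tuning effort goes.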

However, isn’t it a real pain to craft a one-size-fits-all search box that balances search relevancy across all use cases? It used to be. Enter Quepid!

On day one of this project, Duke provided us with a spreadsheet of search queries and the results expected for each. The classic approach is to tweak search queries to improve specific cases, but as you get deeper into the pile you start crossing your fingers that the changes you’re making aren’t affecting other searches. You could manually rerun every important entry in your spreadsheet after each change, but that simply isn’t feasible, so search testing falls by the wayside. If you do this enough, eventually your brain should get annoyed and say “This can be done more efficiently, right?” And thankfully, it can with Quepid.
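The idea of checking every spreadsheet entry automatically can be sketched in a few lines. This is not how Quepid works internally; it is a simplified illustration that assumes a hypothetical spreadsheet layout (one query per row, expected document ids separated by `|`) and uses a canned `fake_search` function in place of a real Solr call.

```python
import csv
import io

# Stand-in for the spreadsheet; the column names and ids are made up.
SPREADSHEET = """query,expected_ids
diabetes,doc-diabetes-overview|doc-diabetes-clinic
afib,doc-atrial-fibrillation
"""


def load_cases(csv_text):
    """Map each query to the list of document ids it is expected to return."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {row["query"]: row["expected_ids"].split("|") for row in reader}


def score_query(expected_ids, returned_ids, k=10):
    """Fraction of the expected documents that appear in the top k results."""
    top_k = set(returned_ids[:k])
    found = sum(1 for doc_id in expected_ids if doc_id in top_k)
    return found / len(expected_ids)


def run_all(cases, search):
    """Score every query in the spreadsheet with the given search function."""
    return {q: score_query(expected, search(q)) for q, expected in cases.items()}


def fake_search(query):
    """Canned results standing in for a call to the search engine."""
    canned = {
        "diabetes": ["doc-diabetes-overview", "doc-news-1", "doc-diabetes-clinic"],
        "afib": ["doc-heart-center"],
    }
    return canned.get(query, [])


scores = run_all(load_cases(SPREADSHEET), fake_search)
```

Here the "diabetes" query finds both expected documents while "afib" finds none, which is exactly the kind of gap a synonym would later close.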

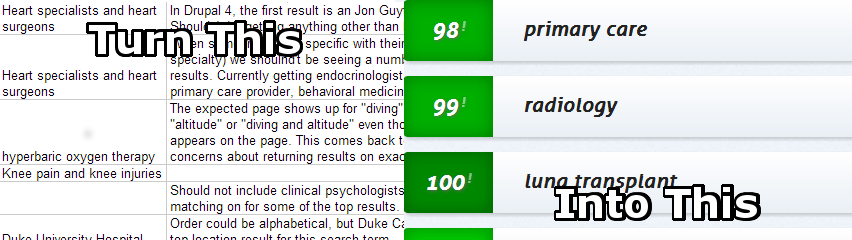

Quepid can turn confusing spreadsheets into an intuitive interface.

On the DukeHealth project, we had a handful of high-level concepts to test:

- Common Sense Criteria – A search for diabetes should bring up results for diabetes.

- Search over Multiple Content Types – Searches had to be relevant across doctors, office locations, treatment information, etc.

- Prioritization of Content – Site structure and hierarchy should affect order of search results. Searching for the broad term cancer should bring up the Cancer Center at Duke page as the top result rather than a lower-level cancer page.

- Doctor Specifics – Doctors needed to be searchable by their specialties.

- Phrase Matching – Searching for “cancer treatments” should yield more results for “cancer” than “treatments”.

- Synonyms – A query for “afib” should return matches on atrial fibrillation.

- Languages – Queries for “dutch doctor” should rank doctors who speak Dutch higher than others.

- Locations – Searches should return results closest to a location even if they don’t match exactly.

- Treatments – If no match is found for a specific treatment, a higher level result that includes the treatment should be returned.

We were able to enter these as cases into Quepid and add multiple test queries for each. From there we could apply our geeky Solr expertise. We added synonyms and applied boosts to certain fields. We altered how the query is parsed and how text is analyzed. With each change we could immediately see whether it helped or hurt every one of our cases. Sometimes we nailed it and everything stayed great, other times a change would fix one case and break others, and, because it happens, sometimes our change was way off and didn’t even help what we were trying to fix. The key point is that Quepid gave us instant visual feedback on our changes. No time was wasted running queries manually to evaluate results.
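The "fix one case, break others" problem comes down to comparing per-query scores before and after a configuration change. The sketch below is a simplified illustration of that comparison, not Quepid's implementation; the scores are invented numbers on a 0 to 1 scale.

```python
def compare_runs(before, after, tolerance=0.0):
    """Classify each query as improved, regressed, or unchanged between two runs."""
    report = {"improved": [], "regressed": [], "unchanged": []}
    # Only compare queries scored in both runs, in a stable order.
    for query in sorted(before.keys() & after.keys()):
        delta = after[query] - before[query]
        if delta > tolerance:
            report["improved"].append(query)
        elif delta < -tolerance:
            report["regressed"].append(query)
        else:
            report["unchanged"].append(query)
    return report


# Invented scores: adding a synonym fixed "afib" but a boost change hurt "cancer".
before = {"afib": 0.0, "dutch doctor": 0.5, "cancer": 1.0}
after = {"afib": 1.0, "dutch doctor": 0.5, "cancer": 0.5}

report = compare_runs(before, after)
```

A change is only safe to keep when the regressed list is empty, or when the trade-off has been looked at and accepted.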

With the help of Quepid, we achieved fantastic relevancy in just a few weeks. It’s a good feeling when you hear users’ initial reaction to the search change from “What the #$%# is this?!?” to “Wow! This is really great.” With Quepid, we were able to show how bad relevancy was under the old configuration and then demonstrate the improvements from our customizations. Our clients could see for themselves how our changes affected their system as we iterated on search quality.

So congratulations to DukeMedicine on the recent launch. We were thrilled to be part of the team! And of course, if you’re working on any Solr tuning projects, consider checking out Quepid because it will make your life a whole lot easier. If you want to make your life even easier, contact us! Bring in the experts for one of our Quepid relevancy health checks.