Does your team use AWS OpenSearch but struggle to deliver relevant, high-quality search results?

‘Think Like a Relevance Engineer’ (TLRE) is a 2-part OpenSearch training that gives your team the skills it needs to tackle search relevance issues. This OpenSearch class teaches search teams how to measure search quality and iterate on relevance against those quality metrics, and it surveys the common techniques used to improve relevance: from basic TF*IDF, to taxonomies, to learning to rank. TLRE is delivered online using a leading videoconferencing platform, including the facility to carry out exercises and labs. Using Solr instead? There’s also a class for that!

Who we are

OpenSource Connections believes in empowering search teams. We have worked with open source search and applied Information Retrieval since 2007, wrote the book Relevant Search, and have pioneered open source relevance tuning tools like the Elasticsearch Learning to Rank plugin, Quepid and Splainer.

Your trainer

Your OpenSource Connections OpenSearch trainer is a relevance thought-leader actively working on real-life relevance issues. OpenSearch training is core to our mission of ‘empowering search teams’, so you get our best and brightest. We never send a trainer to just “read off slides”. We expect you to bring your hardest questions to our trainers. Our trainers expect to be challenged, and know how to handle unique twists on problems they’ve seen before.

Two ways to take training

Private training

Private trainings can be delivered over 2 days in person (EU, UK and USA; we come to your site) or 4 half-days online (worldwide, but usually 9am-1pm US Eastern Time). Two expert instructors from OSC will work through the whole course with you, answer any questions you might have and help you with labs and exercises. This option costs from $2000 per seat with a minimum class size of 8 trainees; contact us for pricing. Travel expenses for in-person training are not included. We can also provide custom trainings on request, covering areas outside the standard materials.

Self-led using the Moodle learning platform

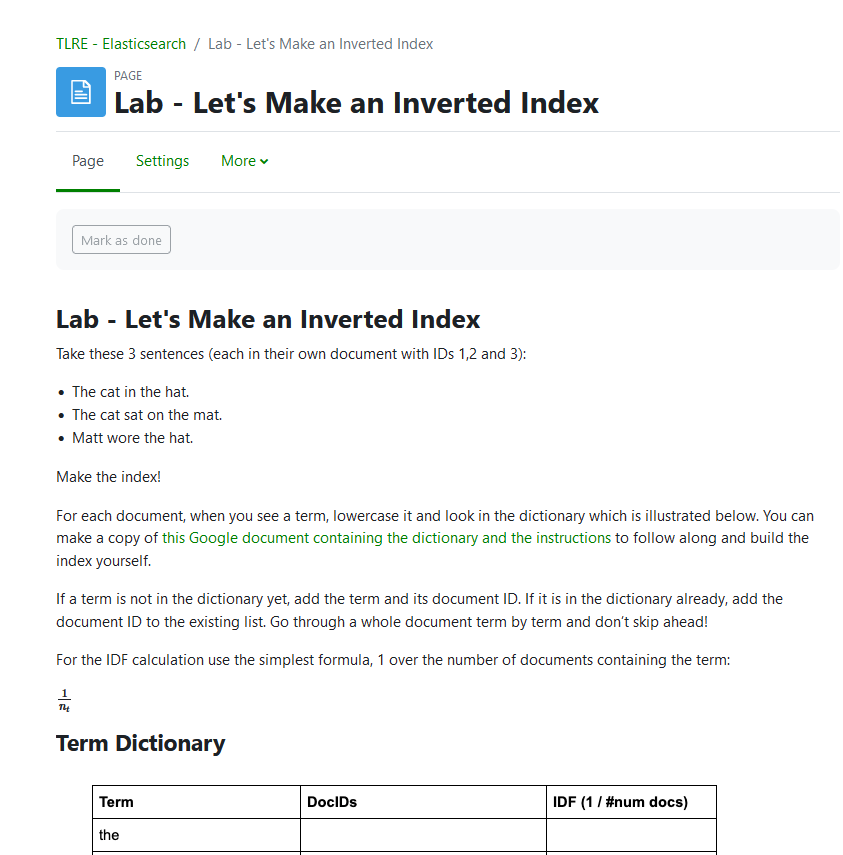

Public TLRE trainings are delivered via the online platform Moodle and cost $750 per seat. This format combines prerecorded video from members of the OSC team, slides, labs and quizzes, so you can make sure you’ve covered all the material. You can see an example lab here.

We estimate that self-led public trainings will take approximately 5 weeks to complete, assuming you spend around 8 hours each week taking the course – but you can take as long as you like!

Here’s a sample of the videos in this new training: Daniel Wrigley talking about Ranking and TF/IDF.

We estimate attendees will need up to 8 hours each week to go through training materials at their own pace. Each Wednesday, from 9am to 10am US Eastern Time, an OSC instructor will lead an online session where you can ask questions about the training and review the results of labs and exercises. We’ll also invite you and other trainees to a private Slack channel on Relevance Slack.

What you’ll get out of it

How to:

- Measure search quality against business metrics

- Appropriately engage product and technical stakeholders

- Steer your organization to a scientific, hypothesis-driven, iterative mindset on relevance

- Select and manipulate high-precision ranking signals in the search engine

- Add semantic intelligence via synonyms and taxonomies

Agenda

Part One – Managing, Measuring, and Testing Search Relevance

This part of the training helps the class understand how working on relevance requires different thinking than other engineering problems. We teach you to measure search quality, take a hypothesis-driven approach to search projects, and safely ‘fail fast’ towards ever-improving business KPIs:

- What is search?

- Holding search accountable to the business

- Search quality feedback

- Hypothesis-driven relevance tuning

- User studies for search quality

- Using analytics & clicks to understand search quality

Part Two – Engineering Relevance with OpenSearch

This part of the training demonstrates relevance tuning techniques that actually work. Relevance can’t be achieved by just tweaking field weights, so we discuss boosting strategies, synonyms, and semantic search. The day closes with an introduction to machine learning for search (a.k.a. “Learning to Rank”):

- Getting a feel for OpenSearch

- Signal Modeling (data modeling for relevance)

- Dealing with multiple, competing objectives in search relevance

- Synonym strategies that actually work

- Introduction to Learning to Rank

Who should come to OpenSearch training?

This training is appropriate for all members of the search team:

- Search engineers

- Data engineers who use the search engine

- Machine learning engineers

- Relevance engineers

- Production-focused data scientists working on search

- Product team members wanting exposure to how to manipulate the search engine

Quotes From Past Attendees:

‘Think Like a Relevance Engineer’ has helped me think differently about how I solve relevance problems.

Matt Corkum, Disruptive Technology Director, Elsevier

What a positive experience! We have so many new ideas to implement after attending ‘Think Like a Relevance Engineer’ training.

Andrew Lee, Director of Engineering for Search, DHI

Get Notified about Upcoming Trainings

Daniel Wrigley

David Fisher

Charlie Hull