There’s been some discussion lately on what the behavior of synonyms should be in Solr and Elasticsearch. As you know, this is one of our favorite topics.

At the heart of the problem, a synonym in your ‘synonyms.txt’ isn’t the same as a linguistic synonym. It’s better to think of the search engine’s synonym filter as a relevance engineer’s tool: a way of generating additional terms when another term is encountered. Such a tool can be leveraged to solve a variety of problems, and shouldn’t be used blindly.

From client conversations, when people say ‘my synonyms are broken’, they usually mean the search engine’s synonyms don’t match the use case they have in their head. In this article, we’ll enumerate the most common use cases in the field. In future articles, we’ll discuss how to solve each use case using the search engine’s functionality.

An Overview of Common Synonym Use Cases

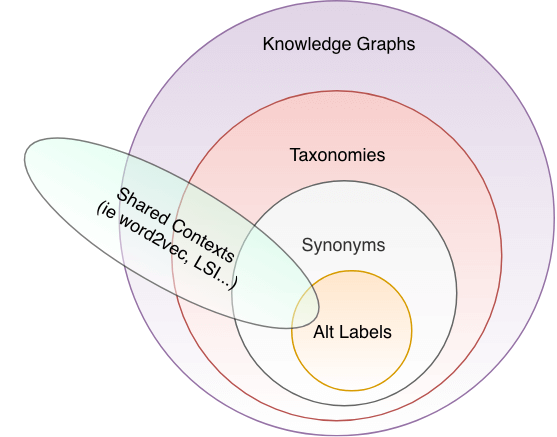

Below is our framework for how we think about synonyms / synonym-like use cases, based on problems clients are trying to solve:

In this article, we’ll define each of these. You’ll notice the nested nature of this diagram. Each inner use case is a simpler version of the outer use case. As you move out, complexity, sophistication, and power increase. The exception is shared contexts (such as with word2vec). As you’ll see, terms can share contexts for many reasons unrelated to shared meaning. In these cases, the goal is to munge the data/process to approximate one of the other use cases (such as synonymy).

What are these use cases? In this blog post, we’ll take an inventory of what they are and how they’re used.

Alternate Labels (you say po-ta-to, I say po-tah-to)

Alternate labels are two terms that should be treated as exact equivalents, interchangeable with one another. Some cases where we see this are:

- Acronyms (united states,usa)

- Weird stems (bacterium,bacteria)

- Alternate spellings (colour,color)

- Common misspellings (disappear,dissapear)

In these cases, search users see the terms as almost 100% overlapping in meaning. There’s no ambiguity.

It’s important to understand how strict this is. Tagging two terms as true alternate terms should be done with a great deal of care. This isn’t really a ‘synonym’ as you would find in a thesaurus. You’ll see what we mean in the ‘Synonyms’ section below.

Expected Search Engine Scoring of Alternate Terms

In the case of alternate terms, we want the terms to receive exactly the same score. A document containing ‘color’ is just as much about the search term ‘colour’. As you might guess, alternate terms are the easiest use case. The default Elasticsearch/Solr synonym functionality (SynonymQuery) works pretty well for this use case.
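To make the ‘exactly the same score’ expectation concrete, here is a minimal Python sketch. It is not how Solr or Elasticsearch implement SynonymQuery; the `parse_synonyms` and `score` helpers and the simple match-count scoring are assumptions made purely for illustration. Every term on a comma-separated line is collapsed to one canonical term, so either spelling matches either document equally.

```python
def parse_synonyms(lines):
    """Map each term to a canonical term (the first entry on its line)."""
    canonical = {}
    for line in lines:
        terms = [t.strip() for t in line.split(",") if t.strip()]
        for term in terms:
            canonical[term] = terms[0]
    return canonical


def score(query_term, doc_tokens, canonical):
    """Count matches after collapsing alternate labels to one canonical term."""
    target = canonical.get(query_term, query_term)
    return sum(1 for tok in doc_tokens if canonical.get(tok, tok) == target)


# Two lines in the style of a synonyms.txt file (illustrative only).
synonyms = parse_synonyms(["colour,color", "bacterium,bacteria"])
doc_a = "pick a color for the wall".split()
doc_b = "pick a colour for the wall".split()

# Both documents get the same score for either spelling of the query.
print(score("colour", doc_a, synonyms), score("colour", doc_b, synonyms))
```

The design choice here is to treat alternate labels as one token rather than as separately weighted terms, which is the behavior this use case calls for.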

Synonyms (in the linguistic sense…)

A synonym is defined by Webster’s as a word with an identical or nearly the same meaning. Sounds the same as an alternate term! In practice though, if you look at a thesaurus, the closeness can be messy and highly contextual. Look at the synonyms for ‘castle’ and you see this problem:

château, estate, hacienda, hall, manor, manor house, manse, mansion, palace, villa

To most, ‘palace’ has a different connotation than ‘castle’, though we humans see them as ‘nearly the same meaning’. ‘Near’ depends on the search corpus, domain, user, and use cases. A historian sees a castle as a defensive structure, synonymous with a fortress. To the historian, this is quite different from a palace, which is a fancy home for nobility. A tourist, however, sees them as nearly the same kind of place to visit. Maybe not exactly the same, but pretty close to interchangeable.

Most people’s Solr and Elasticsearch synonyms files fit into this category. A messy mish-mash of loosely related terms. You might see, for example:

blue jeans,levis,jeans,denim jeans,denim

Many search teams throw these terms in without thinking too deeply about how the search engine should work. Then they’re surprised that the search engine doesn’t prioritize them the way they expect. And sadly, every human being and corpus has a subtly different sense of how/where these terms should be treated as more similar or less.

Expected Search Engine Scoring of Synonyms

The situation here is pretty messy. It’s also rather domain and use case specific, but there are a couple of common themes you see in the academic literature:

- You want the original term to be ranked higher than the synonym terms

- You want synonym terms ranked by some approximation of their ‘closeness’ in the current corpus or user’s perception. Usually based on some measure of how likely the terms truly mean something similar in the corpus, or how likely users seem to indicate they have a similar meaning (such as through watching query refinements).

This fits in with the general field of query expansion. In the query expansion literature, a user’s search term is expanded and scored relative to its relationship to the original term (via co-occurrence metrics). What I like about this is that instead of using word2vec to churn out candidate synonyms, with a lot of data cleaning, this does the opposite. It starts with hypothesized synonyms to seed an exploration, then approximates how they relate via co-occurrence metrics.
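As a rough sketch of that idea, the Python below starts from hypothesized synonyms and weights each one by how strongly it co-occurs with the original term. The choice of Jaccard overlap between document sets is an assumption (the literature uses many different co-occurrence metrics), and the toy corpus, the substring matching, and the function names are made up for illustration.

```python
def doc_sets(corpus, terms):
    """For each term, collect ids of documents whose text contains it.

    Uses naive substring matching so multi-word terms also work.
    """
    return {t: {i for i, doc in enumerate(corpus) if t in doc} for t in terms}


def jaccard(a, b):
    """Overlap of two document sets: |a & b| / |a | b| (0.0 if both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def weight_synonyms(original, candidates, corpus):
    """Give the original term weight 1.0; weight candidates by co-occurrence."""
    sets = doc_sets(corpus, [original] + candidates)
    weights = {original: 1.0}
    for cand in candidates:
        weights[cand] = jaccard(sets[original], sets[cand])
    return weights


# Hypothetical four-document corpus.
corpus = [
    "faded blue jeans with denim jacket",
    "classic levis jeans",
    "denim jacket",
    "slim fit jeans",
]
weights = weight_synonyms("jeans", ["levis", "denim"], corpus)
for term, weight in sorted(weights.items(), key=lambda kv: -kv[1]):
    print(f"{term}: {weight:.2f}")
```

The original term always keeps the top weight, and the seeded synonyms fall below it in order of measured closeness, matching the two themes listed above.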

Taxonomies

As mentioned in the prior section, search teams will say they want the synonyms ‘closest’ to the user’s query scored higher than the synonyms ‘farthest’ away. A search for ‘dress trousers’ should return literal ‘dress trousers’ matches. But after that, types of dress trousers should be returned (khakis, slacks) followed perhaps by other kinds of ‘trousers’.

Notice I said types of ‘dress trousers’, and ‘dress trousers’ are themselves a type of trousers. Talk to enough search teams about their synonyms and you’ll pick up that they’re really talking about a hierarchy of related terms, NOT a bunch of flat synonyms.

Instead of:

trousers,dress trousers,khakis,slacks

What they want to express is better organized as:

\\trousers\formal trousers\khakis

\\trousers\formal trousers\slacks

This is what a taxonomy is: a hierarchy of terms organized by how they fit under broader, less specific categories/terms. Because, if you think a bit more about this, you realize that search queries – heck, language in general – have a kind of hierarchy to them. When we don’t have a lot of vocabulary, our words are fairly generic. “Look at those trousers over there”. But eventually as we acquire new expertise and language, we refine into a bit more specificity – “Look at those khakis over there”. Khakis are a more specific type of trousers. We say that trousers are a hypernym of khakis: they have a broader meaning. Conversely, khakis are a hyponym of trousers – khakis are a type of trousers.

We could flesh this taxonomy out a bit further to include informal trousers:

\\trousers\formal-trousers\khakis

\\trousers\formal-trousers\slacks

\\trousers\informal-trousers\jeans

\\trousers\informal-trousers\corduroys

Don’t get too hung up on the name of any given node here. It’s best to think of them as concepts. They could easily just have numerical identifiers. The actual terms they correspond to are usually dealt with via canonical and alternate terms. Canonical meaning the preferred term for this concept. Alternate terms meaning just what we describe above – words or phrases with interchangeable meaning with the main concept.
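One way to picture this structure in code is a simple child-to-parent map built from the paths above. The sketch below is only an illustration: the helper names are invented, and it assumes every concept name is unique and has a single parent, which real taxonomies often don’t guarantee.

```python
def parse_path(path):
    """Split a backslash-separated path into its non-empty nodes."""
    return [node for node in path.split("\\") if node]


def build_parent_map(paths):
    """Map each concept to its direct parent (None for the root)."""
    parent = {}
    for path in paths:
        nodes = parse_path(path)
        for i, node in enumerate(nodes):
            parent[node] = nodes[i - 1] if i > 0 else None
    return parent


def hypernyms(concept, parent):
    """Walk up the hierarchy: broader concepts, nearest first."""
    chain = []
    current = parent.get(concept)
    while current is not None:
        chain.append(current)
        current = parent.get(current)
    return chain


def hyponyms(concept, parent):
    """Direct children: narrower concepts one level down."""
    return sorted(child for child, p in parent.items() if p == concept)


PATHS = [
    r"\\trousers\formal-trousers\khakis",
    r"\\trousers\formal-trousers\slacks",
    r"\\trousers\informal-trousers\jeans",
    r"\\trousers\informal-trousers\corduroys",
]
parent = build_parent_map(PATHS)
print(hypernyms("khakis", parent))            # ['formal-trousers', 'trousers']
print(hyponyms("informal-trousers", parent))  # ['corduroys', 'jeans']
```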

Very close synonyms in a taxonomy

Often what we see as ‘synonyms’ can be placed somewhere into a good taxonomy, helping us express exactly how and where they relate. For example, we might see ‘denim’ as a synonym for jeans, placing both jeans and denim under a broader category.

\\trousers\informal-trousers\denim-trousers\denim

\\trousers\informal-trousers\denim-trousers\jeans

Ambiguity in Taxonomies

You might also be thinking some of these terms are ambiguous. Corduroys and denim are both also materials, khaki is sometimes a color, which means as terms they might exist under a completely different branch of our taxonomy:

\\colors\brown\khaki

...

\\materials\cotton\denim

\\materials\cotton\corduroy

...

\\clothing-items\trousers\formal-trousers\khakis

\\clothing-items\trousers\formal-trousers\slacks

\\clothing-items\trousers\informal-trousers\denimtrousers\denim

\\clothing-items\trousers\informal-trousers\denimtrousers\jeans

\\clothing-items\trousers\informal-trousers\corduroys

This gives search engines opportunities to discover ambiguities and offer users results with different interpretations of a user’s query term.
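A small Python sketch shows how such ambiguities could be surfaced from the paths themselves. It is a toy under stated assumptions: `normalize` is a deliberately naive singularizer (it only drops one trailing ‘s’), and grouping by top-level branch is just one possible definition of ‘different interpretation’.

```python
from collections import defaultdict

PATHS = [
    r"\\colors\brown\khaki",
    r"\\materials\cotton\denim",
    r"\\materials\cotton\corduroy",
    r"\\clothing-items\trousers\formal-trousers\khakis",
    r"\\clothing-items\trousers\formal-trousers\slacks",
    r"\\clothing-items\trousers\informal-trousers\denimtrousers\denim",
    r"\\clothing-items\trousers\informal-trousers\denimtrousers\jeans",
    r"\\clothing-items\trousers\informal-trousers\corduroys",
]


def normalize(label):
    """Naive singularization (an assumption): drop one trailing 's'."""
    return label[:-1] if label.endswith("s") else label


def interpretations(paths):
    """Map each normalized leaf term to the top-level branches it appears under."""
    branches = defaultdict(set)
    for path in paths:
        nodes = [n for n in path.split("\\") if n]
        branches[normalize(nodes[-1])].add(nodes[0])
    return branches


# A term is ambiguous when it lives under more than one top-level branch.
ambiguous = {
    term: sorted(tops)
    for term, tops in interpretations(PATHS).items()
    if len(tops) > 1
}
for term, tops in ambiguous.items():
    print(term, "->", tops)
```

A search application could use a result like this to offer both the color and the clothing interpretation of a query for ‘khaki’.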

Finally, whether some text corresponds to a concept in a taxonomy may have little to do with just the text. It may also involve detecting the part of speech, sense, sentiment, or entity of the underlying text being placed in the taxonomy. For example ‘A dog pants’ is not discussing trousers. But ‘A dog’s pants’ is.

Other hierarchies

In addition to hypernymy/hyponymy – which relate terms by broader and narrower meaning – you often see meronomy (aka partonomy). In these cases, the hierarchy expresses ‘part of’ relationships. A feather is a part of a wing which is part of a bird. A dog is part of a pack. There’s an important distinction here, as meronomy doesn’t relate to meaning. But people do place meronyms in hierarchical taxonomies.

Expected Search Engine Scoring of Taxonomies – Nym Ordering

Alessandro Benedetti and Andreas Galvao of Sease defined a useful structure for thinking about scoring taxonomies that they call Nym Ordering. Something like:

- Exact term (lowest doc freq) – jeans

- Alt labels – ‘jean’

- Hyponyms (as in child concepts) – denim, blue jeans, stonewashed jeans, bell-bottom jeans…

- Direct Hypernyms (parent concept) – items labeled just informal trousers

- Cousins (terms with shared parent concept) – other kinds of informal pant (corduroys)

- Grandparent hypernyms – all forms of /trousers (slacks, khakis)

As a relevance engineer, you can decide how deep to go in the nym expansion (all the way to great-grandparent hypernyms?). Indeed, a lot boils down to how the taxonomy is designed and/or generated – which itself is a skill and a discipline with its own conferences and specialists. I often say, for most search teams, a good taxonomy will have a much bigger impact than Learning to Rank ever will.
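The ordering above can be sketched in Python as an expansion that tags each related term with its tier. This is only an illustration of the idea, not the Sease implementation: the `PARENT` map reuses the example trousers taxonomy, the boost values are hypothetical, and ‘cousins’ follows the definition used above (terms with a shared parent concept).

```python
PARENT = {  # child -> parent, following the example trousers taxonomy
    "trousers": None,
    "formal-trousers": "trousers",
    "informal-trousers": "trousers",
    "khakis": "formal-trousers",
    "slacks": "formal-trousers",
    "jeans": "informal-trousers",
    "corduroys": "informal-trousers",
    "blue jeans": "jeans",
}
ALT_LABELS = {"jeans": ["jean"]}

# Hypothetical boosts: earlier tiers in the nym ordering get larger weights.
TIER_BOOST = {
    "exact": 10.0,
    "alt_label": 8.0,
    "hyponym": 6.0,
    "hypernym": 4.0,
    "cousin": 2.0,
    "grandparent": 1.0,
}


def children(concept):
    """Direct child concepts, sorted for a stable order."""
    return sorted(c for c, p in PARENT.items() if p == concept)


def nym_expansion(concept):
    """Return (term, tier) pairs in nym order for one concept."""
    expansion = [(concept, "exact")]
    expansion += [(alt, "alt_label") for alt in ALT_LABELS.get(concept, [])]
    expansion += [(child, "hyponym") for child in children(concept)]
    parent = PARENT.get(concept)
    if parent is not None:
        expansion.append((parent, "hypernym"))
        expansion += [(c, "cousin") for c in children(parent) if c != concept]
        grandparent = PARENT.get(parent)
        if grandparent is not None:
            expansion.append((grandparent, "grandparent"))
    return expansion


for term, tier in nym_expansion("jeans"):
    print(f"{term} ({tier}) boost={TIER_BOOST[tier]}")
```

Stopping at the grandparent is an arbitrary cut-off for this sketch; how deep to expand is exactly the decision left to the relevance engineer.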

We’ll talk more about using a search engine to score taxonomies in a future blog article. For now I recommend my talk, Taxonomical Semantical Magical Search and Max Irwin’s Haystack Talk on Learning Taxonomies for more information.

Knowledge Graphs

What if we want to move past just hierarchies – to allowing search systems to reason about the search query and solve users’ problems? Dogs eat Alpo and sometimes, sadly, squirrels and shoes. These facts might matter if we’re building a search system to help solve common pet problems expressed in the search query “help! My dog swallowed a shoe!”

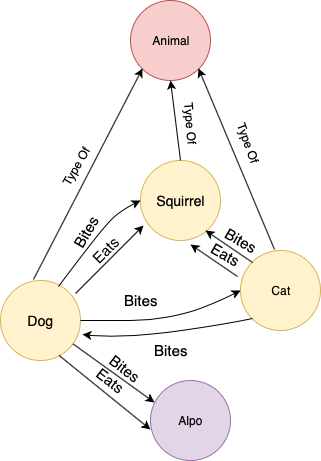

Knowledge graphs are a set of facts, expressed as a graph of relationships between connected concepts: each node is a noun, and each connecting edge is a relationship (often a verb). For example, we know the fact ‘dogs bite cats’. We can represent that as a triple connecting the concepts ‘dog’ and ‘cat’ with the relationship ‘bites’, as below:

subject, verb, object

dog, bite, cat

More things bite other things:

Cat, bite, squirrel

Cat, bite, dog

Dog, bite, squirrel

Dog, eat, Alpo

...

We know of other entities that ‘bite’ and ‘eat’ other entities, and can begin to put together a graph that connects these concepts.

(Confusing terminology alert: you’ll often hear such a thing described as an “ontology”. We like “knowledge graph” because that’s very specific to what it is. And there’s some ambiguity: people often use ontology to also mean a taxonomy)

Knowledge graphs can be used to answer questions about the information. If someone asks “what animals do dogs bite?”, we might be able to translate this (using a tool like Mycroft) into a structured query that looks like:

- This animal is bitten

- A dog bit it

- -> What is it?

We can use a knowledge graph and enumerate the bitten animals:

- Squirrel, Cat

Knowledge graphs are often stored in graph databases, which are built to support queries like this.
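To show the shape of such a query without a real graph database, here is a minimal in-memory Python sketch. The triples are the ones listed above, lowercased and singular for consistency; the `objects_of` and `subjects_of` helpers are hypothetical names, and a production system would use a graph database’s own query language instead.

```python
# (subject, verb, object) triples from the examples above.
TRIPLES = [
    ("dog", "bite", "cat"),
    ("cat", "bite", "squirrel"),
    ("cat", "bite", "dog"),
    ("dog", "bite", "squirrel"),
    ("dog", "eat", "alpo"),
]


def objects_of(subject, verb, triples=TRIPLES):
    """Answer 'what does <subject> <verb>?' by pattern-matching triples."""
    return sorted({o for s, v, o in triples if s == subject and v == verb})


def subjects_of(verb, obj, triples=TRIPLES):
    """Answer 'what <verb>s <obj>?' by pattern-matching triples."""
    return sorted({s for s, v, o in triples if v == verb and o == obj})


# "What animals do dogs bite?"
print(objects_of("dog", "bite"))   # ['cat', 'squirrel']
# "What bites dogs?"
print(subjects_of("bite", "dog"))  # ['cat']
```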

Indeed, Alexa, Siri, and Wolfram Alpha probably work like this, at least in part, armed with knowledge graphs like Wikidata to support these sorts of queries.

We might also have systems that can infer facts for our knowledge graph from our corpus, and bring that into search. Check out Max Irwin’s Haystack Talk on algorithms that allow that. Now that Max is an OSC employee, perhaps he’ll write a blog post in this series touching on ontologies!

A note on Solr’s Knowledge Graph and Elasticsearch’s Graph Plugin

Just a quick point to clarify confusion. Some have been confused by Elasticsearch’s and Solr’s graph inference capabilities. These aren’t the same as a formal knowledge graph that we describe here (they lack verbs). Instead, these are really interesting in their own right: learning what terms are connected based on co-occurrences. YOU must decide what those co-occurrences mean in your data/for your domain and use case.

Expected Search Engine Scoring of Knowledge Graphs

Knowledge graphs represent arbitrary facts that relate to the domain. How they correspond to some search use case is going to be very domain specific. Indeed, the search engine probably isn’t using it; more likely a graph database is being used to interpret and solve user questions.

These use cases are less about scoring individual documents and more about correctly mapping the query into a graph query that can pull back a set of facts from the graph database. This is a slightly different, though very related, problem to search relevance ranking. We’ll discuss this in a future blog post.

Shared Contexts (i.e. embeddings, word2vec, and the like…)

Tools like word2vec capture statistical rhythms in language that are often the start of discovering relationships between words. Teams often end there, and sidestep the difficulty of thinking about synonymy, taxonomy, knowledge graphs. Can a tool like word2vec eliminate our need to think about the relationships above?

Specifically, many will start their NLP journey by analyzing a corpus for two types of shared contexts:

- Co-occurrences: when two terms tend to occur frequently together in the same sentence, document, or other kind of shared container

- Shared Contexts: when two terms tend to co-occur with the same words

For example, some common co-occurrences for cat and dog might include:

- The vet examined the cat and dog

- I love playing with my cat and dog

Shared contexts connect ‘cat’ and ‘dog’ through the contextual words they occur with:

- The vet examined the cat and dog

- Vets love cats and dogs

In the first example, occurring in the same container (doc/sentence/etc) acts as a shared context between ‘cat’ and ‘dog’. In the second example a term (‘vet’) acts as a shared context between cat and dog. If we notice that enough similar words group around ‘cat’ and ‘dog’, we can conclude that cat and dog are ‘close’ in our corpus: they often share contexts.
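The difference between the two signals is easy to see in a few lines of Python. This is a minimal sketch with toy sentences adapted from the examples above; the stopword list, the whitespace tokenizer, and the choice of a sentence as the ‘container’ are all assumptions.

```python
from collections import Counter, defaultdict
from itertools import combinations

SENTENCES = [
    "the vet examined the cat",
    "the vet examined the dog",
    "i love playing with my cat and dog",
]
STOPWORDS = {"the", "i", "my", "and", "with"}


def tokenize(sentence):
    """Whitespace tokenizer that drops a few stopwords."""
    return [w for w in sentence.split() if w not in STOPWORDS]


def cooccurrences(sentences):
    """Count how often each unordered pair of terms shares a sentence."""
    counts = Counter()
    for sentence in sentences:
        for a, b in combinations(sorted(set(tokenize(sentence))), 2):
            counts[(a, b)] += 1
    return counts


def contexts(sentences):
    """Map each term to the set of other terms it appears alongside."""
    ctx = defaultdict(set)
    for sentence in sentences:
        terms = set(tokenize(sentence))
        for term in terms:
            ctx[term] |= terms - {term}
    return ctx


pairs = cooccurrences(SENTENCES)
ctx = contexts(SENTENCES)
# Direct co-occurrence: 'cat' and 'dog' in the same sentence.
print(pairs[("cat", "dog")])
# Shared contexts: words that appear with both 'cat' and 'dog'.
print(sorted(ctx["cat"] & ctx["dog"]))
```

Note that ‘cat’ and ‘dog’ share the context word ‘vet’ even though the two vet sentences never mention both animals together.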

Tools like word2vec, Latent Dirichlet Allocation, and Latent Semantic Analysis can compute a per-word representation that encodes the contexts that word occurs in. This representation, a dense vector, is built so that words with similar contexts cluster closely together (their vectors are geometrically close). Words with dissimilar contexts tend to be farther apart. When we represent an item as a dense vector, with the expectation it will cluster together with ‘similar’ things we refer to that vector as an embedding.
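‘Geometrically close’ is usually measured with cosine similarity. The sketch below uses hand-picked, hypothetical 3-dimensional vectors purely for illustration; real embeddings come from a trained model and typically have hundreds of dimensions.

```python
import math

# Hypothetical embeddings, invented for this example only.
EMBEDDINGS = {
    "cat": [0.9, 0.8, 0.1],
    "dog": [0.8, 0.9, 0.2],
    "shoe": [0.1, 0.2, 0.9],
}


def cosine(u, v):
    """Cosine similarity: dot product divided by the product of vector lengths."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def nearest(term, embeddings):
    """Return the other term whose vector is most similar to the given term's."""
    scored = [
        (cosine(embeddings[term], vec), other)
        for other, vec in embeddings.items()
        if other != term
    ]
    return max(scored)[1]


print(nearest("cat", EMBEDDINGS))
print(round(cosine(EMBEDDINGS["cat"], EMBEDDINGS["shoe"]), 2))
```

A high similarity only says the two words occur in similar contexts; it does not say why, which is the caveat the rest of this section explores.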

These tools are the start of the journey, not the destination. Terms co-occur or share contexts for a variety of reasons, including all of the reasons listed above:

- Alternate Terms: Terms share contexts because they truly are alternate representations of the same term (‘CIA’ and ‘C.I.A’ are both connected through context words ‘intelligence’ and ‘espionage’)

- Synonyms: Terms share contexts because they are synonyms (‘CIA’ and ‘spooks’ are sort of loosely connected through context words ‘intelligence’ and ‘espionage’)

- Hypernym/Hyponym: Terms share contexts because they have a hypernym / hyponym relationship. “The shoe store sold wingtips” vs “The shoe store sold dress shoes”. Here dress shoes and wingtips are connected through shared context ‘shoe store’

- Statements of Fact: Terms share contexts because they relate to each other through factual statements (such as described above using a knowledge graph). For example “Dog bites cat” or “Shark bites dog”. Here the shared verb ‘bites’ connects ‘dog’ and ‘shark’. This also might occur a lot with adjectives – ‘red shoe’ and ‘red sky’. Sharing ‘red’ doesn’t mean ‘sky’ and ‘shoe’ are related terms

And other situations we haven’t even discussed yet:

- Antonyms: Certain function words occur in similar contexts, but actually have the opposite meaning. A classic example from chatbots is “I want to confirm my reservation” vs “I want to cancel my reservation”. Cancel/confirm may cluster together due to shared contexts, but are definitely not synonyms!

Using an embedding to emulate any of the use cases we described in this article is possible, but requires work that we won’t go into here. Indeed it’s very much an open research area! Many strategies are discussed in the book Deep Learning for Search by Tommaso Teofili and in my Neural Search Frontier talk. See also Simon Hughes’s talk on Vectors in Search for how to use an embedding in a search engine like Solr.

In conclusion… no cut and dry solutions

I want your takeaway to be to know what problem you’re solving before diving into solutioning! There’s no one ‘cut and dry’ solution to this stuff. Indeed, aside from measurement of relevance, this is an area search teams spend a tremendous amount of time working on.

“But DOUG I want an easier answer than ‘it depends…’. Put some skin in the game already!” you say?

OK OK if I must. In many (most?) cases a good taxonomy is one of the best assets a search team can have. More important even than jumping on the Learning to Rank bandwagon. Not everyone needs a full knowledge graph, and usually synonyms don’t come with enough richness to express what search teams intend (many people’s ‘synonyms’ are really badly structured taxonomies). Even e-commerce search is about knowledge management! Is there much difference between an attentive, expert salesperson and a librarian after all? So whether you intend to hand-craft a taxonomy or learn a taxonomy from your corpus or user queries, ‘Get thee to a Taxonomy!’ as The Bard says.

That’s an instinct-level reaction, and I’m certainly far from knowing everything about this zany field. Search is a very diverse field that constantly surprises. So it’s important to understand that each tool has its time and place. In future articles, we hope to write about each of these topics in greater depth.

If you’d like to discuss, disagree, or ask questions, I hope you’ll join us over at Relevance Slack or contact us directly.