At OSC, we have a well developed methodology that we apply to our client work, and one of the core tenets is using Continuous Integration to ensure our code behaves the way we intend it to.

However, recently weve had two projects were the usual CI solutions such as CruiseControl etc havent worked out well, and we had to develop our own internal CI tool that we are ready to publish to the world called MonkeyCI.

On the first project, which was a PHP based application with 5 full time developers, we used CruiseControl with the phpUnderControl addon. However, we were running CruiseControl on what turned out to be an underpowered hosted Windows server, and we kept getting build failure errors related to environmental difficulties. Now, if youve seen my talk about CI, you know how big I am on speccing a beefy server for CI, and this experience reinforced that lesson. We decided that migrating the CI environment to a bigger server was something that we felt was in the “nice to have” category, and that it could wait till the next iteration. But we needed something immediate. Enter MonkeyCI.

The heart of CI is all about building the code, running the tests, and publishing the results frequently. Everything else, the reports, the red/green lava lamps, the pretty JavaDocs etc are all gravy. To meet the needs of your developers, you need to know if the “bar is green”. So MonkeyCI does that in a decidedly low tech way:

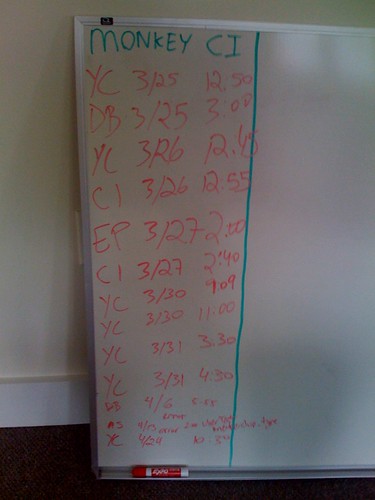

Everytime someone runs the full suite of unit tests they record the day and time, and put their initials. If the build is failing then they immediately fixed it. Weve played with writing the results in red for failing builds and green for successful builds as well. Then, each day at the standup someone highlights how the CI is doing, and verifies that multiple folks are initialing, which means that the tests are running on multiple systems successfully.

While this does mean you have an additional manual process, its also really easy to do, requiring just a whiteboard! And for small project teams, the overhead of maintaining a reliable CI system is too much.

Were doing another two developer project right now, and at least so far MonkeyCI has been great. We havent seen integration issues yet such as database scripts that dont run, or busted code being checked in. Ill post a picture of our whiteboard once we have a bunch of checkoffs recorded!

We call this simple low tech process MonkeyCI because typically we refer to anything manual, such as testing by pounding keyboards as Monkey testing. Also, somewhat of a reference to the great developers at the Primate Programming Institute who I am sure would use this approach to CI!: