My colleague Scott had been bugging me about NiFi for almost a year, and last week I had the privilege of attending an all-day training session on Apache NiFi. NiFi (pronounced like wifi) is a powerful system for moving your data around. It attempts to provide a unified framework that makes it simpler to move data from the edge of your systems to your core data centers, with your Ops folks as the core audience. It offers some unique features to help you feel confident about what is happening to data as it flows through your system, including a visual programming model (unusual, at least in the FOSS world) for building those flows.

As our instructor, Andrew Psaltis of Hortonworks, put it:

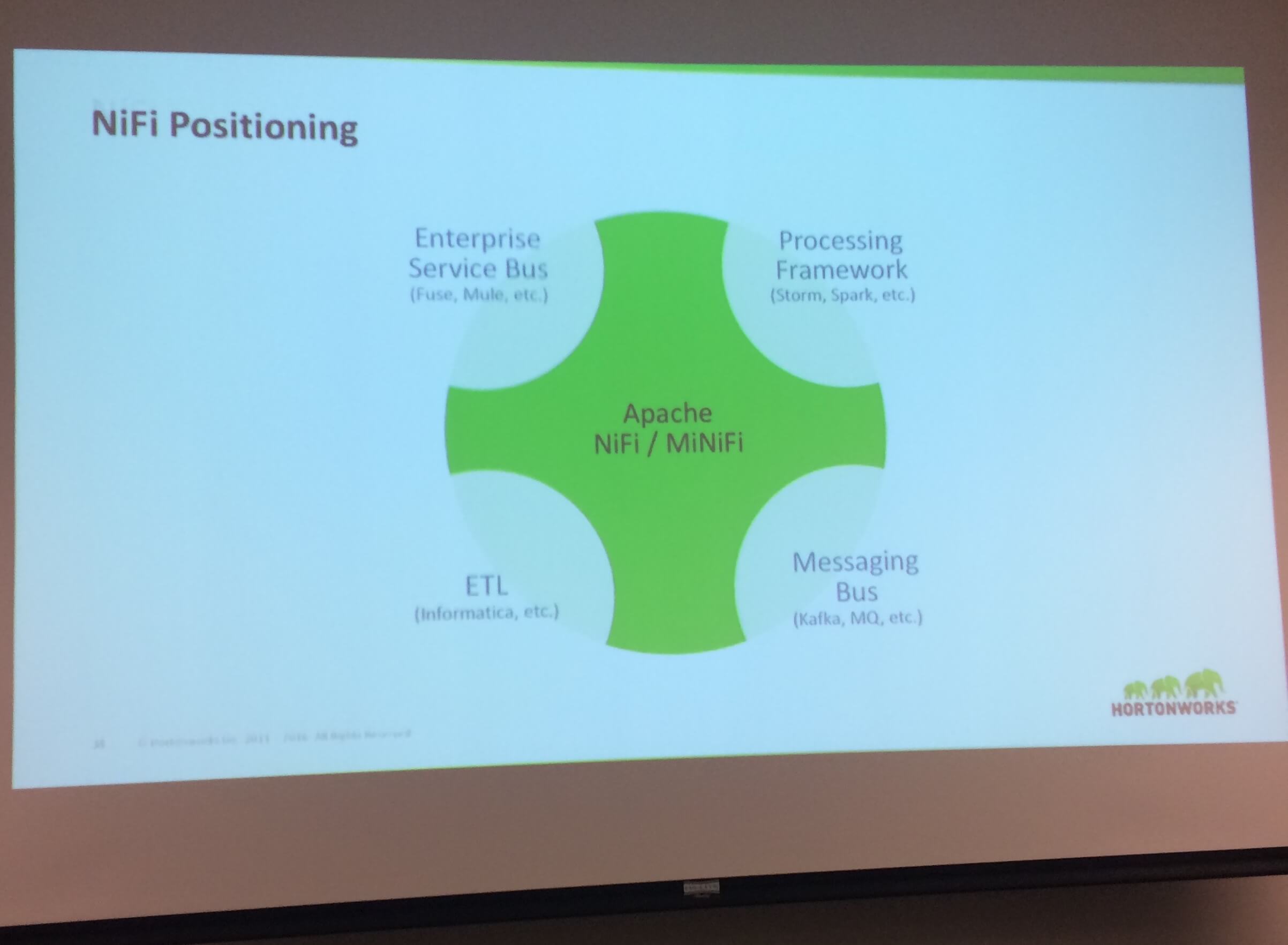

As humans, we want to classify something new into buckets we’re already familiar with. But NiFi doesn’t classify easily.

While NiFi clearly overlaps with systems like Enterprise Service Buses, processing frameworks, messaging buses, and most clearly ETL tools, it isn’t just one of them. Instead, NiFi is trying to pull together a single coherent view of all your data flows, be very robust and fast, and provide enough data manipulation features to be useful in a wide variety of use cases.

Below are some of my impressions based on the day of training I took:

- Shares many of the best aspects of Camel, but fixes some weaknesses. I really like Camel and we’ve used it a number of times, and the flow of data through steps is very similar. However, Camel configuration follows a very developer-centric “build and deploy” model. If you have a problem in production, fixing it requires shutting everything down, redeploying configurations, and then starting it back up. I really liked the visual flows that NiFi provides, and the ability to stop and start processors dynamically. Plus, NiFi has a much deeper ability to track what is happening to your data, which they call data provenance. Hawt.io just doesn’t compare from a management perspective.

- Simple to stand up. Unlike many of the projects that fall under the Hadoop ecosystem, NiFi seems to be very self-contained. My typical approach is to look at how complex the Dockerfile is for a project. The most popular one, `mkobit/nifi`, is just a couple of commands, mostly around downloading the distribution. NiFi doesn’t depend on much of the rest of the Hadoop ecosystem.

- Simple data processing is best in NiFi. My test project takes PowerPoint documents, extracts the text content, converts each slide to an image, and then OCRs that image. (This is to power a highlight-on-image search interface that is pretty awesome!) I want to replace my homegrown data processing logic with NiFi. What is slick is that it was super easy to stand up a `ListenHTTP` processor that actually receives my PPTX files! Passing in other metadata as part of the POST to `ListenHTTP` was easy as well. However, trying to send each .PPTX file over to my API for further processing using `PostHTTP` turned out to be a bust. It wasn’t obvious how to connect things, though admittedly I am a newbie at this. I made progress with the `InvokeHTTP` processor, but the exact wiring magic still wasn’t obvious. If I am manipulating JSON or CSV data, on the other hand, the tools for tweaking the data feel much more native.

- For more complex processing, it looks like you can pretty easily create your own Processor, as a block of Java code that is packaged up as a NAR (NiFi Archive). I’m going to try out the OCR process as a NAR, and see how that goes.

- MiNiFi is a lightweight version of NiFi. It has most (all?) of the power of NiFi, but without the UI layer. This is what you want to put on your cash register or Raspberry Pi system, and it comes in both a Java and a super compact C++ version. This made me think of Beats from Elastic.co. Beats ties into Logstash, and MiNiFi ties into NiFi. Logstash is great, but it’s DevOps oriented, and doesn’t have the visual layer or the provenance tracking that NiFi has.
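
To make the PPTX upload mentioned above a bit more concrete, here is a minimal sketch in Python of posting a file plus metadata to NiFi's `ListenHTTP` processor. The port (8081), the `X-Meta-` header prefix, and the helper names are assumptions for illustration, not something from the training; `contentListener` is the processor's default base path. For the headers to show up as FlowFile attributes, the processor's "HTTP Headers to receive as Attributes (Regex)" property would need to match them, for example `X-Meta-.*`.

```python
import urllib.request
from pathlib import Path

# Assumed endpoint: a ListenHTTP processor listening on port 8081 with the
# default base path "contentListener". Adjust both to match your own flow.
NIFI_URL = "http://localhost:8081/contentListener"

PPTX_MIME = (
    "application/vnd.openxmlformats-officedocument.presentationml.presentation"
)


def build_upload_request(payload, metadata, url=NIFI_URL):
    """Build (but do not send) a POST request carrying the file and metadata.

    Each metadata entry becomes an "X-Meta-<key>" header. The prefix is a
    hypothetical convention chosen for this sketch.
    """
    headers = {"Content-Type": PPTX_MIME}
    for key, value in metadata.items():
        headers[f"X-Meta-{key}"] = str(value)
    return urllib.request.Request(
        url, data=payload, headers=headers, method="POST"
    )


def upload_pptx(path, metadata, url=NIFI_URL):
    """Send one .pptx file to NiFi and return the HTTP status code."""
    file_path = Path(path)
    # Include the original file name alongside the caller's metadata.
    all_metadata = {"filename": file_path.name, **metadata}
    request = build_upload_request(file_path.read_bytes(), all_metadata, url)
    with urllib.request.urlopen(request, timeout=30) as response:
        return response.status
```

With a flow running, something like `upload_pptx("deck.pptx", {"project": "demo"})` (a hypothetical file and metadata) would send the file. Keeping the request building separate from the sending makes it easy to inspect the headers before anything goes over the wire.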

The next time I’m looking to move data around, and need a fairly flexible way of bringing data in from multiple sources, I think NiFi has a lot to offer. I know a lot of folks like Talend, but it just feels heavy and kind of old. NiFi, while it’s been around for a while, still feels like it is in its growth stage in adoption and features. Plus the list of already supported processors is really impressive! Now if they can just make the connecting of processors a bit simpler and more visual, and slightly less magic, then nirvana!

Want to dive deeper? Check out this awesome list of NiFi resources!